Forward

And so to Boston to act as steward sidekick to JP Rangaswami on stage at the Enterprise 2.0 conference. Where we tried something new, and where I ended up befuddling many in the largely besuited audience. Herein something of an apologia for my part in my downfall.

Forerunner

A couple of years ago JP encouraged Osmosoft to make something to accompany his talk at Le Web 3 Fourth Edition '07. Those numbers are important! Believe! Worried about the vagaries of Wi-Fi at large conferences, we built the agenda into a TiddlyWiki and handed out copies on USB sticks, allowing audience members to make notes offline. Note takers were able to automatically share their thoughts using RSS as the interwebs wafted in and out across the hall. The project, dubbed RippleRap, evolved over a series of conferences and ended up being integrated with Confabb.com. JP's talk is online along with a transcript, but sadly the video with a blushing Phil Hawksworth demonstrating RippleRap in the background no longer seems to be resolving. Also, tweets from then are unreachable from Twitter paging and search, so in effect they have fallen into a memory hole. C'est Le Web!

Foray

Daunting odds are stacked against any new project gaining traction during a conference, and whilst RippleRap chimed with a number of note takers, especially those already familiar with TiddlyWiki, the number who were willing to use an unfamiliar tool to record their experience wasn't likely to generate a network effect. I think we learned a lot from the RippleRap experience, and the resultant set of plugins has been reused by Osmosoft and the TiddlyWiki community in a number of other projects.

Foresight

So when JP approached us to consider a "RippleRap 2.0 for Enterprise 2.0", we were pretty clear in our constraints: we wanted to build something we could reuse elsewhere, something more interactive, and something which engaged an audience using their current preferred tools. How that should manifest itself led to some fairly wide-ranging discussion, which boiled down to a desire to increase JP's connection with the audience by capturing attention data, either from people clicking on a local copy of the slides or from people tweeting hashtags for each part of the talk, and then feeding that attention data back to the audience by highlighting areas on a map.

Formidable

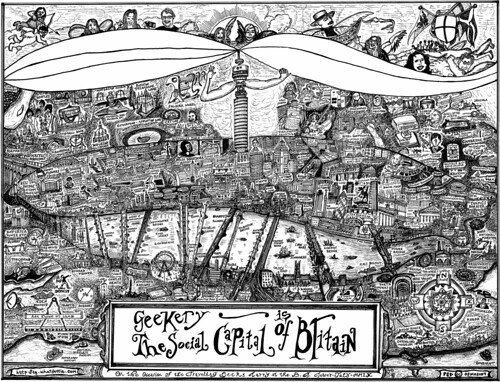

As we talked, we spiraled inextricably towards a single large zoomable Cecily landscape. The background would be one of my über-doodles. This wasn't without risk, given that prior experimentation with Cecily and large bitmaps or SVG versions of GITSCOB hadn't performed all that well.

Forearmed

Then of course getting JP to close down a talk with enough time in advance upon which I could build a dedicated drawing was risky. Well that's unfair. JP is a just-in-time speaker; a constant deep thinker, he has a repertoire of ideas to assemble and his talks tend to form the evening before. Pinning him down a week or more in advance could be a little like trying to bottle Hooghly river mist.

Forecast

So to match JP's just-in-time style I undertook to text-mine Confused of Calcutta, with the intention of illustrating each of the concepts and key phrases discovered by eye and N-Gram. We considered some automatic layout, such as treemapping or clustering, but that wasn't making the most of this being a performance. So during the talk I would find and draw attention to relevant doodles, resizing them based on relevance. At the end of the talk I'd zoom out to unveil a pictorial doodle-tag-cloud of the presentation.
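For the curious, the N-Gram side of that mining can be sketched in a few lines of Python. This is only an illustration of the general idea, not the script I actually used: the function names `ngrams` and `top_phrases` and the two sample posts are made up for the example.

```python
import re
from collections import Counter


def ngrams(text, n):
    """Split text into lowercase words and return every run of n consecutive words."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


def top_phrases(posts, n=2, k=5):
    """Count n-grams across all posts and return the k most frequent with their counts."""
    counts = Counter()
    for post in posts:
        counts.update(ngrams(post, n))
    return counts.most_common(k)


# Hypothetical sample text standing in for real blog posts.
posts = [
    "Design for loss of control.",
    "We should design for loss of control and get out of the way.",
]

for phrase, count in top_phrases(posts, n=3, k=3):
    print(count, phrase)
```

Phrases that keep turning up across posts are the candidates worth a doodle; picking which ones deserve a drawing is still a job for the eye.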

For interaction with Twitter, we'd project tweets as growl notifications above the landscape and I'd curate interesting comments for JP to respond to. In essence, I would play the role of a visual jazz accompanist to JP's free-styling voice.

Finally we'd host the visualization on TiddlySpace, a hosted version of our open source project. This was important not only as a useful deadline for a soft-launch of our service, but so others would be able to build upon and reuse our code in their own presentations.

Foreshadow

There were several problems with this approach, not least that, days before the conference, I was very unhappy with many of the doodles I'd come up with: they were essentially very clip-arty. Drawing on the move was problematic. My workflow for moving drawings from my Moleskine into SVG tiddlers fell apart without daylight or my trusty scanner, and the vagaries of hotel Wi-Fi meant uploading everything took much longer than one would hope.

Finally, and predictably, when we spoke the night before, I was excited to hear JP's outline, whilst simultaneously horrified that I didn't have that many drawings covering subjects raised by his talk!

Fortitude

I'd tried to test my laptop with the projectors the day before, but the room was in the process of being built, so it fell to the morning of the talk, just before the kick-off, for us to wander on stage to check out the A/V. I plugged my laptop in and a brilliant and super-helpful techie popped up and confidently changed my display settings. He knew what he was doing. We were in safe hands. Phew!

But as I turned around to leave I watched, as if in slow motion, JP disappear over the edge of the stage. JP had tumbled a good couple of feet from the stage flat onto his back on the floor! He barely had more than a couple of minutes to dust himself down before returning up on stage, and unfalteringly walking straight into his talk. What a trooper!

For-FS!

But there was another problem: me. On stage I relaxed, sat back, listening and enjoying JP stride into his talk, waiting for my laptop to be projected on the large screen behind me. I ran Firebug, debugged and fixed a typo in the code and waited. I composed a frustrated tweet, "why aren't they projecting me?" and waited. I gestured frantically at the screen behind, but couldn't spot my friendly techie's face or indeed see any faces in the audience due to the dark room and strong lighting. It was then that I dashed backstage to work out what was happening, only to realise I was indeed projecting on sidescreens out of sight from the stage and of course, on the webcam, beamed to a large audience including my colleagues back at base. What a pillock!

Forsooth!

By rights I should have realised what was going on from the Twitter stream, but that was frankly overwhelming. JP is highly quotable, and the audience were clearly enjoying quoting his talk as tweets. Then those tweets were being retweeted. A lot.

I'd say there were upper hundreds of tweets during the 15 minutes we were on stage, and then a sustained 1000 tweets an hour being generated containing the hashtag "e2conf" for the duration of the conference. When I later sat down to make sense of it all, amongst the tweets which included "Rangaswami" or "@jobsworth" and which hadn't fallen off the back of Twitter search, I spotted:

- "Design for Loss of Control" x31

- "Empower the edge" x12

- "Get out of the way" x80

- "It took IBM 40 years to become evil, Microsoft 20, Google 10, Facebook 5 and Twitter 2.5" x38

- "Your worth is not what you have done, but what you can do" x23

- "I have a better credit rating than most of the banks I know" x7

- "The joke is if you're on second life, you don't have a first one" x9

- "Never have I seen the ROI of a restroom" x19

- "Promote abundance not scarcity" x12

- "Are these tools making us dumb? We may be losing individual skills but are developing collective skills" x35

- "Firewalls are like cheese, full of holes" x7

- "My father had 1 job, I've had 7, my son will have 7, at the same time" x9

- "Many of the companies I worked for no longer exist. Without social networks I don't exist" x7

- And so on ..
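A tally like this is easy enough to script. Here's a minimal sketch in Python, assuming the tweets have already been saved as plain strings. The function name `tally_quotes` and the sample tweets are invented for illustration; the counts above came from the real stream, not from this code.

```python
def tally_quotes(tweets, phrases):
    """Count how many tweets contain each phrase, ignoring case.

    Returns (phrase, count) pairs sorted with the most repeated phrase first.
    """
    counts = {phrase: 0 for phrase in phrases}
    for tweet in tweets:
        text = tweet.lower()
        for phrase in phrases:
            if phrase.lower() in text:
                counts[phrase] += 1
    return sorted(counts.items(), key=lambda item: item[1], reverse=True)


# Made-up sample tweets, standing in for a saved search of the hashtag.
tweets = [
    "RT @someone: Get out of the way #e2conf",
    "get out of the way! love it @jobsworth",
    "Design for loss of control, says @jobsworth #e2conf",
    "Lunch queue is enormous #e2conf",
]
phrases = ["Design for Loss of Control", "Empower the edge", "Get out of the way"]

for phrase, count in tally_quotes(tweets, phrases):
    print(f'"{phrase}" x{count}')
```

A plain substring match will miss paraphrases and truncated retweets, so counts produced this way are best read as a floor.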

That bug I'd treated the audience to fixing turned out to be rather essential: a keyboard shortcut to clear the display of tweets!

Forthrightness

My troubles with the projector didn't go unnoticed and there was some fair criticism:

"Confused of Calcutta" maybe - I'm confused of Boston right now - hard to follow on-screen @jobsworth #e2conf

— Nigel Fenwick

Live use of back-channel highlights how confusing dynamic presentation is 4 the audience via Tweet onscreen @jobsworth #e2conf LOL

— Nigel Fenwick

Good opening speech by @jobsworth but nothing added by distracting images #e2conf

— Nigel Fenwick

#e2conf #keynote not sure what the other guy on stage is projecting on screen while @jobsworth talks, but its very distracting

— Kapil Qupta

But it wasn't all bad, there were some hopeful comments:

@jobsworth has his own DJ... awesome.

— Megan Murray

@jobsworth great content side screens drive focus back to him #e2conf 'design for loss of control' and boundaryless ... #e2conf

— jholston

@jobsworth is enjoying the Twitter screen backchannel while he's talking #e20conf

— Andrea Meyer

He illustrates an instance of the kinds of changes we are seeing by being onstage “playing the instrument that is his voice, while [a colleague] plays an instrument called the screen.” (The screen images were distracting, but then it’s possible that it is I who is not yet fully capable of living in this new world.)

— Patti Anklam

Forbearance

As if my being unprepared for the tweets wasn't bad enough, my plan to build a landscape fell apart. At one point JP mentioned the Twitter backchannel, and I brought up his post on the Hecklebot. Unfortunately the act of searching the TiddlyWiki cleared the story, and I was left to rebuild the doodle tag cloud from scratch. Ach!

Forensics

So there were some simple things which would have made things better.

Looking at tweets when I could revealed that for the most part members of the audience were generating, en masse, a chanting, unthinking echolalia of retweets. I should have anticipated and embraced the tweeting and retweeting to highlight parts of the talk which grabbed the audience.

During the talk, bringing mass retweeting to JP's attention wasn't all that useful. Every now and again someone out there would react or respond with a snarky aside or comment, often without the conference hashtag. What would have been cool would have been to be able to quickly find and bring interesting reactions from outside the room to the attention of the speaker and the room during the talk.

Highlighting individual tweets by bringing up a separate tab worked well. Given my time again, I would have moved the tweeting into a separate page and flipped between tabs, rather than put too much on the screen at once.

As for the visualization, avoiding a sea of clip art was a challenge, but it turned out the poor performance of Cecily was mostly because of gradients and drop shadows. Removing expensive text decoration sped things up significantly and offered hope for some more complex zooming über-doodles.

For The Win!

So I think it's fair to say I didn't do a great job; mea culpa for not being on top of the room, or ahead with the sketching.

It's obvious JP's talks stand up by themselves, and don't need slides or gimmicks, but given his desire for greater interactivity, I think the idea of a ZUI blog explorer and a backchannel-curating compère, able to bridge the gap betwixt speaker and audience, may have merit.

I'm motivated to keep experimenting with and refining the visualization of confusedofcalcutta.com at confusedofcalcutta.tiddlyspace.com [it's large, a complete copy of the blog in a single TiddlyWiki, so you'll need a browser with good JavaScript and CSS3 support; Safari 4, Firefox 3.5 or Chrome work best].

I'll explain more about how to author your own version of this presentation tool and other ways you might find TiddlySpace useful in following posts.